A durable broker and replayable AI runtime for governed agent orchestration.

DriftQ combines durable messaging with replayable workflows, policy and risk checks, human-in-the-loop approvals, secure tool execution, agent memory, and production-ready observability in one Go core.

```shell
# run the broker (API and dashboard on port 8080)
docker run --rm \
  -p 8080:8080 \
  -v driftq-data:/data \
  ghcr.io/driftq-org/driftq-core:1.3.0

# in another terminal: create a topic
driftqctl topics create --name demo --partitions 1

# produce
curl -X POST http://localhost:8080/v1/produce \
  -H "content-type: application/json" \
  -d '{"topic":"demo","value":"hello"}'
```

```shell
# consume (stream)
driftqctl topics peek --topic demo --group g1
```

Embedded dashboard, same binary

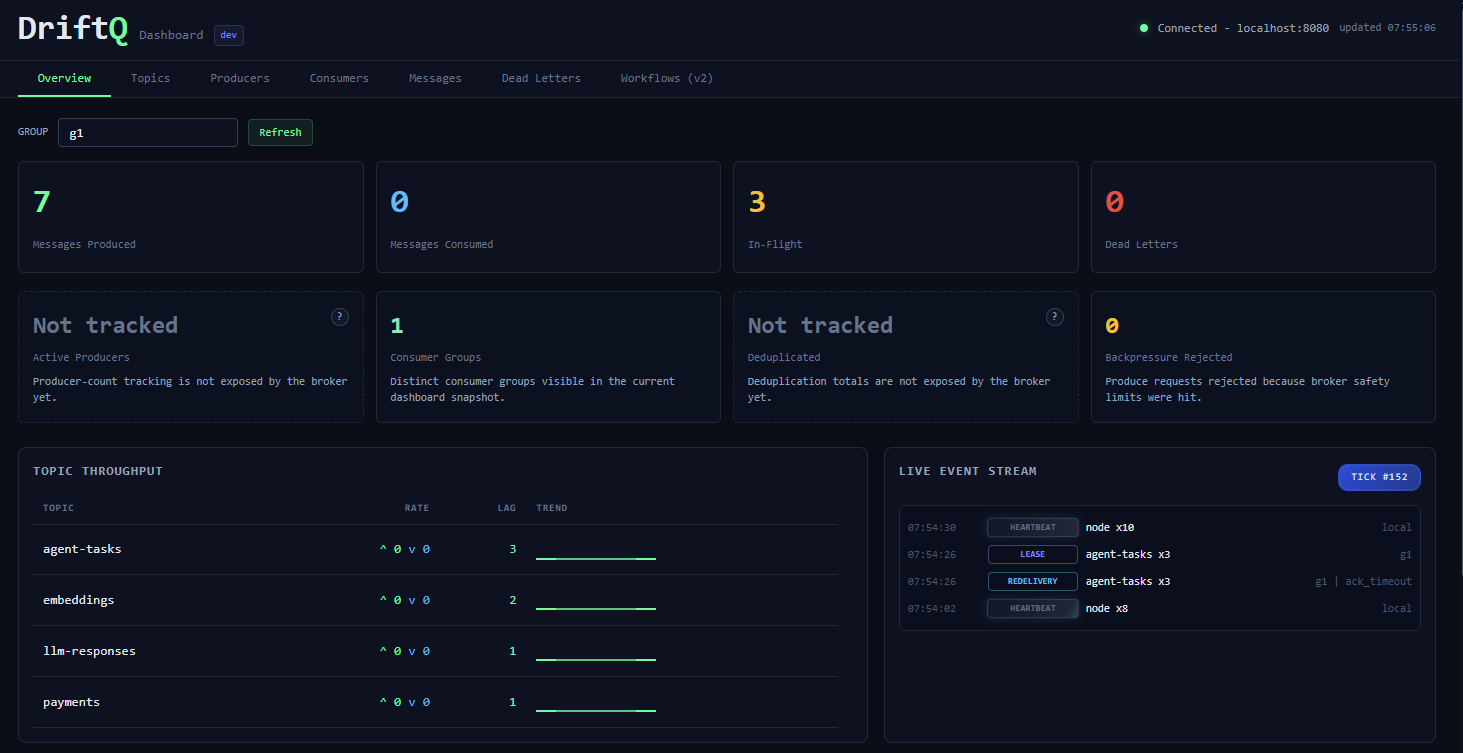

DriftQ-Core ships a built-in dashboard under /ui/. No separate service, no extra deploy, and no detached admin app to keep in sync.

- Served by the same driftqd process as the API

- Included in the Docker image and local Docker flow

- Covers overview, topics, runs, artifacts, runtime state, and debug surfaces

Run DriftQ, then open http://localhost:8080/ui/.

Runtime primitives that do not leak complexity

You should not have to rebuild durability, replay, governance, tool safety, and observability every time you ship an AI workflow. DriftQ keeps the hard parts explicit and the happy path fast.

- Durable topics + WAL-backed storage

- Replay, lineage, and what-if branch timelines

- Policy, risk, HITL, and tenant governance

- Tool gateway, receipts, and OpenTelemetry-native telemetry

Time-travel replay, run diffs, workflow diffs, root-cause views, and branching what-if simulations.

RBAC, policy checks, runtime risk scoring, approvals, edits, timeouts, and governed resume flows.

Approved tool registry, schema validation, secret redaction, tool-call audit logs, and staged side effects.

OTel traces, runtime metrics, broker telemetry, and traceable run, node, tool, and approval spans.

Failure is normal. Make recovery boring.

DriftQ is designed for workflows that touch flaky downstreams: LLMs, tools, third-party APIs, webhooks, and long-running jobs. The system should recover with evidence, not just dump the problem on your on-call.

Want a deeper tour? Start with Use Cases.

DriftQ keeps runs replayable and governed, so you can pause for humans, inspect lineage, branch a replay, or stage risky side effects without rebuilding runtime plumbing yourself.

Re-drive from the step that changed, compare alternate branches, and inspect what changed across runs.
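Re-driving from a changed step means reusing the recorded outputs of earlier steps, re-executing only the changed step and everything after it, and then diffing the new branch against the original run. The Go sketch below shows that idea for a linear workflow. `Step`, `Record`, `replayFrom`, and `diff` are hypothetical names, and real runs would also record inputs, timestamps, and lineage.

```go
package main

import "fmt"

// Step is a hypothetical workflow step that maps the previous output to a new one.
type Step struct {
	Name string
	Run  func(in string) string
}

// Record is what a run stores for each executed step.
type Record struct {
	Step   string
	Output string
}

// replayFrom reuses recorded outputs for steps before `from` and re-executes
// steps from `from` onward. It builds a new slice, so the original run is untouched.
// input is only used when from == 0.
func replayFrom(steps []Step, recorded []Record, from int, input string) []Record {
	branch := make([]Record, 0, len(steps))
	branch = append(branch, recorded[:from]...)
	in := input
	if from > 0 {
		in = recorded[from-1].Output
	}
	for _, s := range steps[from:] {
		out := s.Run(in)
		branch = append(branch, Record{Step: s.Name, Output: out})
		in = out
	}
	return branch
}

// execute runs every step from scratch.
func execute(steps []Step, input string) []Record {
	return replayFrom(steps, nil, 0, input)
}

// diff lists steps, present in both runs, whose outputs differ.
func diff(a, b []Record) []string {
	var changes []string
	for i := range a {
		if i < len(b) && a[i].Output != b[i].Output {
			changes = append(changes, fmt.Sprintf("%s: %q -> %q", a[i].Step, a[i].Output, b[i].Output))
		}
	}
	return changes
}

func main() {
	steps := []Step{
		{Name: "fetch", Run: func(in string) string { return in + "|fetched" }},
		{Name: "summarize", Run: func(in string) string { return in + "|summary-v1" }},
		{Name: "notify", Run: func(in string) string { return in + "|sent" }},
	}
	run := execute(steps, "ticket-42")
	// Change only the summarize step (for example, a new prompt) and re-drive from it.
	steps[1].Run = func(in string) string { return in + "|summary-v2" }
	branch := replayFrom(steps, run, 1, "ticket-42")
	for _, c := range diff(run, branch) {
		fmt.Println(c)
	}
}
```

Because `fetch` is served from the recorded run, the branch does not repeat its side effects, which is what makes this kind of what-if replay safe to run.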

Policy, risk scoring, HITL approvals, tenant boundaries, and secure tool execution are first-class.

Core telemetry is OpenTelemetry-native, so traces and metrics can flow into the rest of your stack.

Ship governed AI workflows without rebuilding your runtime stack.

If you already feel the pain of retries, tool safety, replay, runtime visibility, or human approvals, DriftQ is worth a serious look. If not, keep things simple until you need the trust layer.